Published in AI & Small Business

SoftBank's AI "Central Kitchen" Solves the Right Problem the Wrong Way

I Run An AI Content Company In Japan. SoftBank Wants To Be My Data Supplier.

I read the Chunichi Shimbun's report (in Japanese and paywalled) on March 26th and my first reaction was: finally, someone is trying to fix the plumbing. My second reaction was: why does it have to be SoftBank?

Here is what happened. SoftBank is building a platform to sit between content owners (newspapers, publishers) and AI companies. The platform takes articles, processes them into data formats that AI services can consume, and handles the licensing. Content owners get a share of the revenue when their content is used. AI companies get data with clear provenance, no legal grey areas, no risk of training on someone's copyrighted work without permission.

The trial launches this summer. The formal version is planned for spring 2027. It starts with text and will eventually expand to images and video.

I run Kafkai, an AI content generation company based in Tokyo. This announcement puts SoftBank directly in the supply chain between the people who create content and companies like mine that use AI to generate it. I am simultaneously a potential customer, a potential data source, and a participant in the ecosystem this platform would reshape.

So I have opinions.

The Problem Is Real. I Have Been Writing About It.

I want to be clear about something first: the problems SoftBank is trying to solve are genuine. I have been writing about them for over a year.

Problem one: AI companies scrape copyrighted content without paying creators. I covered this in detail when I wrote about the Kadrey vs Meta and Bartz vs Anthropic copyright lawsuits. Meta deliberately chose pirated books over licensed content for training data. Anthropic's case raised the question of whether AI learning from copyrighted material is "transformative use." Japan's Article 30-4 technically allows using copyrighted material for AI training if the use is "not for enjoyment purposes," but that legal permission does not make it ethical. As I wrote then: machines can only copy what humans have already made. Compensating the humans should be the baseline, not an afterthought.

Problem two: unreliable training data produces unreliable AI. The Chunichi Shimbun article makes an important point about this. If AI systems train on unverified social media posts alongside professional journalism, the output quality suffers. I wrote about this in my piece on AI hallucinations, where studies show AI tools produce incorrect information 15% to 20% of the time, and that number climbs dramatically in specialised domains. Content provenance is not just a copyright issue. It is a quality issue.

Problem three: Japan's data is locked away. When I attended the Venture Cafe Global Gathering panel in Tokyo and wrote about Japan's AI potential, one of the clearest findings was that Japanese corporations sit on massive datasets that remain locked in corporate silos, inaccessible to startups and researchers. The data exists. The access does not.

SoftBank's platform addresses all three of these. Content creators get paid. Data provenance is tracked. Locked-away content becomes accessible to AI developers.

So I should be applauding. And I partly am. But the way they are building it worries me more than the problems it solves.

The Central Kitchen Problem

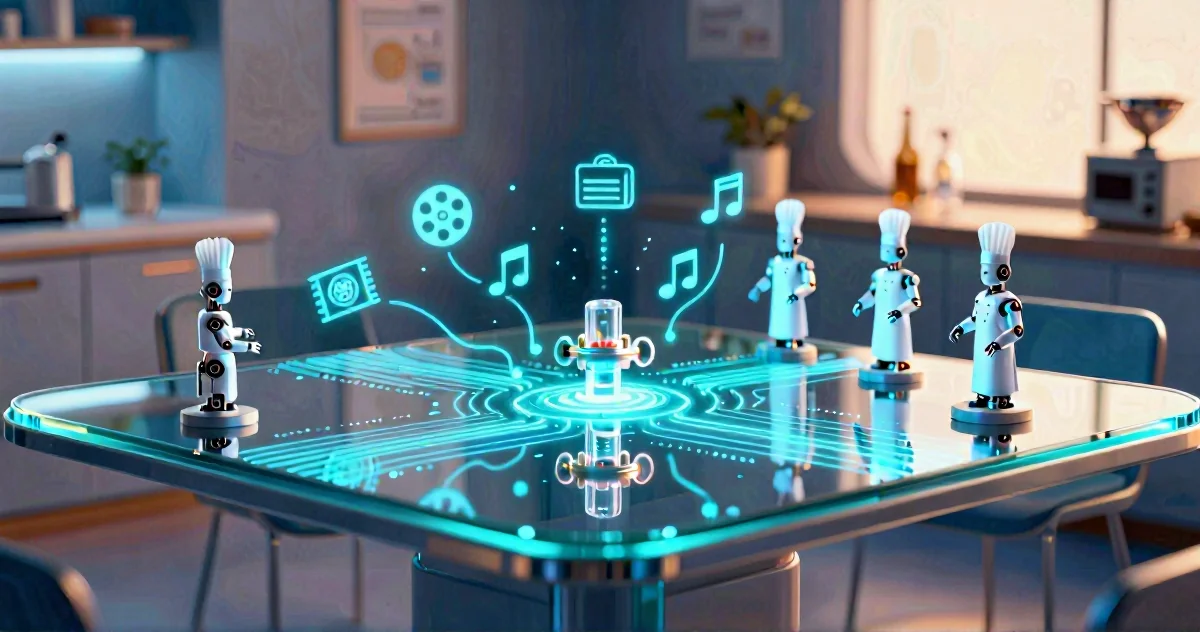

The Chunichi Shimbun article uses an analogy that I think reveals more than SoftBank intended. It compares the platform to a "central kitchen" (セントラルキッチン), like the kind that processes food ingredients in bulk and delivers ready-to-use portions to restaurant chains.

The analogy is accurate. That is the problem.

A central kitchen is brilliant if you are running a chain of family restaurants that all serve the same hamburg steak. You standardise the ingredients, optimise the processing, and deliver uniform portions at scale. Efficiency through uniformity.

AI development is not a chain restaurant business.

Different AI companies need fundamentally different things from content. A company building a medical AI needs articles processed and annotated differently from one building a customer service chatbot. A startup training a model for legal document analysis has different requirements from one working on creative writing assistance. A central kitchen optimises for the throughput of the middleman, not for the diverse needs of the people eating the food.

The article also says the processed data is used for "seasoning" (味付け) AI content. I have cooked in enough kitchens to know that whoever controls the seasoning controls the flavour. SoftBank is not offering raw ingredients for AI companies to prepare as they see fit. They are offering pre-processed, pre-seasoned portions. That is a very different proposition.

Who Controls The Kitchen Controls The Menu

Here is where my concerns become structural:

- A single intermediary creates a chokepoint. If SoftBank decides certain content is not worth aggregating, or certain publishers are not worth partnering with, that content simply does not enter the AI ecosystem. One company's business decisions become the filter for an entire country's AI training data.

- Revenue sharing terms will be dictated by SoftBank, not by content creators. Japanese newspaper publishers are not negotiating from a position of strength. Print circulation has been declining for years. Digital revenue has not replaced it. When SoftBank offers revenue sharing, it looks like a lifeline. But once publishers are locked in, their leverage to renegotiate shrinks. They go from being scraped for free to being paid whatever SoftBank decides is fair.

- AI companies become dependent on a single data supplier. I wrote about the importance of data sovereignty in Competing in the Realm of AI: Japan's Imperative, referencing the Nvidia CEO's advice: "You need to own your own data, build your own AI factories." Replacing scattered, unlicensed scraping with a single licensed supplier is an improvement in legality. It is not an improvement in independence.

- Startups and smaller AI companies may be priced out. SoftBank's platform will need to generate revenue. The pricing will reflect SoftBank's economics, not the budgets of early-stage AI companies. If this becomes the dominant way to access Japanese content for AI training, it could widen the gap between well-funded AI labs and the startups that actually drive innovation.

Compare this with what the National Institute of Informatics (NII) did when it released llm-jp-3-172b-instruct3, a 172-billion-parameter Japanese language model. NII took the opposite approach: open data, open tools, open documentation. Over 1,900 researchers collaborated through the GENIAC project. The result was sovereign AI infrastructure built through community effort, not corporate gatekeeping.

SoftBank's central kitchen is corporate gatekeeping with a revenue-sharing label on it.

What Would Actually Work

I am not arguing that the status quo is acceptable. AI companies scraping content without compensation is a problem. Unreliable training data is a problem. Locked-away datasets are a problem. But the solution matters as much as the diagnosis.

- Multiple competing platforms, not one. Competition keeps pricing fair, ensures content creators have options, and prevents a single gatekeeper from deciding what enters the AI ecosystem. If SoftBank builds one platform and Fujitsu builds another and NTT builds a third, the market works. If only SoftBank builds one, it is a tollbooth.

- Open standards for content provenance. The provenance problem is real, but the solution should be a standard, not a proprietary platform. Think of it like email: an open protocol that any provider can implement, not a walled messaging app controlled by one company.

- Creator-controlled licensing. Content owners should set the terms for how their work is used, at what price, and for what purposes. The platform should be a marketplace where creators have agency, not a bulk purchasing operation where SoftBank negotiates on their behalf.

- Government involvement in setting the framework. Japan has been developing what I have previously called a "third way" governance model for AI, balancing between America's commercial-first approach and Europe's regulation-first approach. This is exactly the kind of issue where government should define the rules of the game rather than letting one company design the marketplace.

- Transparency in data processing. If content is being processed by the "central kitchen," content owners should see exactly how their work was transformed, who consumed it, and how the revenue was calculated. Black-box processing by a middleman is not accountability.
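To make the transparency point concrete, here is a minimal sketch of the kind of statement a content owner could be shown. Everything in it is my own assumption for illustration: the `UsageEvent` fields, the `revenue_statement` function, the pro-rata split by usage units, and the fee expressed in basis points are all hypothetical. Nothing in the Chunichi Shimbun report describes how SoftBank will actually log usage or calculate payouts.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class UsageEvent:
    """One line of a hypothetical usage ledger that the content owner can read."""
    article_id: str  # which article was used
    owner: str       # the publisher that owns it
    consumer: str    # the AI company that consumed the processed data
    transform: str   # how the platform processed it, e.g. "summarised"
    units: int       # billable usage units (an assumed metric)


def revenue_statement(events, pool_yen, platform_fee_bps):
    """Split a revenue pool across owners in proportion to logged usage.

    platform_fee_bps is the platform's cut in basis points (2000 = 20%).
    Returns (payouts_by_owner, platform_take). Amounts are whole yen, and any
    rounding remainder is counted in platform_take so the totals reconcile.
    """
    units_by_owner = defaultdict(int)
    for event in events:
        units_by_owner[event.owner] += event.units

    total_units = sum(units_by_owner.values())
    if total_units == 0:
        # Nothing was used, so nothing is distributed.
        return {}, pool_yen

    distributable = pool_yen * (10_000 - platform_fee_bps) // 10_000
    payouts = {
        owner: distributable * units // total_units
        for owner, units in units_by_owner.items()
    }
    platform_take = pool_yen - sum(payouts.values())
    return payouts, platform_take
```

With a ledger like this, a publisher can recompute its own payout from the events it is shown. That is the property I care about: the formula can be anything the parties agree on, as long as the inputs are visible and the arithmetic can be checked by the people being paid.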

The Uncomfortable Question For Me

I have to be honest about my own position here, because it is complicated.

As a content creator, I want compensation and provenance tracking for my work. SoftBank's platform promises that.

As someone running an AI company, I want reliable, legally clear training data. The platform promises that too.

But as someone who has spent the past two years arguing that Japan needs open, decentralised, sovereign AI infrastructure, I cannot cheer for centralisation disguised as facilitation.

SoftBank is right that the current situation is broken. AI companies scraping without compensation, no provenance tracking, unreliable data polluting AI outputs. All of that needs fixing.

But building a middleman monopoly to fix it is like solving a traffic jam by installing a tollbooth. The road still has the same number of lanes. There is just one more entity taking a cut.

Japan's AI future depends on how it structures access to data. Get it right, and you build a diverse, competitive ecosystem where startups and established companies alike can innovate. Get it wrong, and you build a system where one company controls the pipeline and everyone else pays rent.

I know which version I would rather build in.
